The May edition of our “Advanced In the Wild Malware Test” reveals the different approaches that developers of protection software take to securing Windows 10. In this study, which is compliant with MITRE tactics and techniques, we analyzed 11 solutions that protect network devices. The test ran without interruption for the whole month, 24 hours a day. This was possible thanks to a programmed system that performs the tedious calculations and actions in Windows and automates the entire test procedure (aggregating and analyzing logs, and giving a final verdict). The design and operation of this system are described in this article and in the methodology.

In the test, we used the program versions suggested by the developers. Here is the full list of tested solutions (in alphabetical order):

- Avast Free Antivirus

- Avira Antivirus Pro

- Bitdefender Total Security

- Comodo Advanced Endpoint Protection

- Comodo Internet Security

- Emsisoft Business Security

- G DATA Total Security

- Kaspersky Total Security

- Microsoft Defender

- SecureAPlus Pro

- Webroot Antivirus

The products and Windows 10 settings: daily test cycle

Tests are carried out in Windows 10 Pro x64. User Account Control (UAC) is disabled because the purpose of the tests is to check how effectively a product protects against malware, not how the testing system reacts to Windows prompts. Other Windows settings remain unchanged.

The Windows 10 system has the following software installed: an office suite, a document viewer, an email client, and other tools and files that give the impression of a normal working environment.

Automatic updates of Windows 10 are disabled throughout each month of testing. Because an update could cause a malfunction, Windows 10 is instead updated every few weeks under close supervision.

Security products are updated once a day. Before the tests are run, virus databases and protection product files are updated, so the latest versions of the protection products are tested every day. All antivirus applications had access to the Internet during the tests.

Malicious software

In May, we used 1549 malware samples for the test, including banking trojans, ransomware, backdoors, downloaders, and macro viruses. In contrast to well-known testing institutions, our tests are much more transparent: we share the full list of harmful software samples with the public.

VirusTotal vs real working environment

We use real Windows 10 working environments in graphical mode, which is why the results for individual samples may differ from those presented by the VirusTotal service. We point this out because inquisitive users may compare our tests with the scan results on the VirusTotal website. It turns out that the differences between real products installed on Windows 10 and the scan engines on VirusTotal are significant.

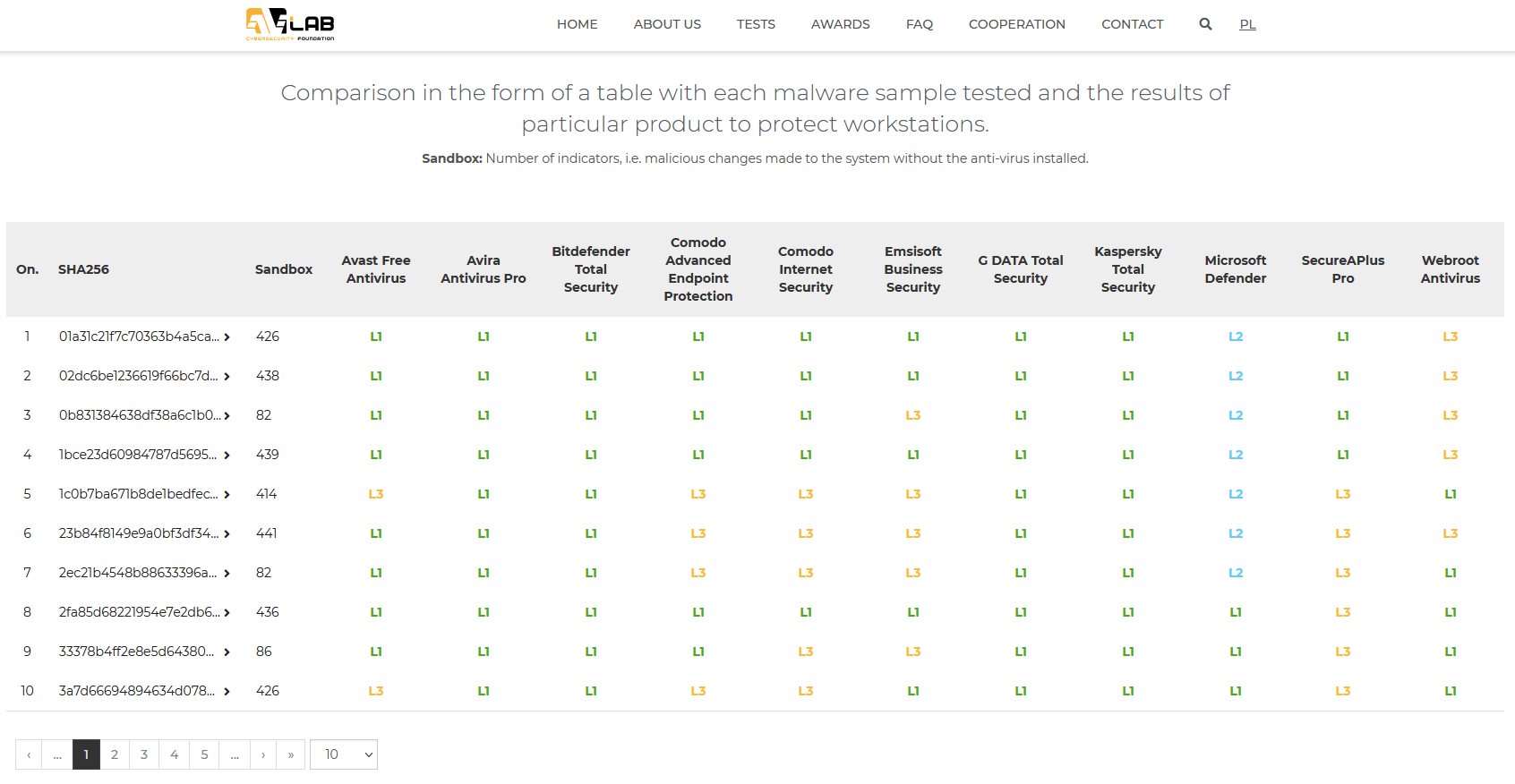

Levels of blocking malicious software samples

We share checksums of the malicious software with researchers and security enthusiasts, grouped by the protection technology that contributed to detecting and stopping each threat. According to independent experts, this innovative approach to comparing security will contribute to a better understanding of the differences between products available on the market.
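For readers who want to match a file against a published checksum, the following is a minimal sketch of how such a checksum could be computed. The article does not state which hash algorithm is used, so SHA-256 is an assumption here, and the function names `sha256_hex` and `sha256_of_file` are hypothetical helpers, not part of our testing system.

```python
import hashlib
from pathlib import Path


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 checksum of a byte string as lowercase hex.

    SHA-256 is an assumption; the published lists may use another algorithm.
    """
    return hashlib.sha256(data).hexdigest()


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large samples are never fully loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # iter() with a sentinel stops when read() returns an empty byte string.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

Comparing the returned hex string with an entry in a published list is then a plain, case-insensitive string comparison.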

Each malware sample is assigned to one of the following levels, depending on whether and when the tested solution blocked it:

- Level 1 (L1): The browser level, i.e., a virus has been stopped before or after it has been downloaded onto a hard drive.

- Level 2 (L2): The system level, i.e., a virus has been downloaded, but it has not been allowed to run.

- Level 3 (L3): The analysis level, i.e., a virus has been run and blocked by a tested product.

- Failure (F): The failure, i.e., a virus has not been blocked and it has infected a system.
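The four outcomes above can be expressed as a small decision function. This is only an illustrative sketch: the `SampleObservation` fields and the `classify` function are hypothetical names chosen for this example and do not describe how our automated system stores or evaluates its logs.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    L1 = "L1"  # browser level: stopped before or after download
    L2 = "L2"  # system level: downloaded, but not allowed to run
    L3 = "L3"  # analysis level: run, then blocked by the product
    F = "F"    # failure: not blocked, system infected


@dataclass(frozen=True)
class SampleObservation:
    """Hypothetical summary of what was observed for one sample."""
    blocked_in_browser: bool
    blocked_before_run: bool
    blocked_after_run: bool


def classify(obs: SampleObservation) -> Level:
    """Return the earliest level at which the sample was stopped."""
    if obs.blocked_in_browser:
        return Level.L1
    if obs.blocked_before_run:
        return Level.L2
    if obs.blocked_after_run:
        return Level.L3
    return Level.F
```

The checks are ordered from the earliest stage to the latest, so a sample is always reported at the first level where it was stopped.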

The latest results of blocking each sample are available in a table at https://avlab.pl/en/recent-results/.

May 2021 – a comment from AVLab Cybersecurity Foundation

Let us point out that in this edition of the test all solutions achieved the maximum result in blocking threats. We do not award negative points for detecting and blocking a false positive (after launching it), nor positive points for blocking malware early (right after a link is opened in a browser).
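To illustrate what scoring without bonuses or penalties means in practice, here is a minimal sketch in which a sample counts as blocked regardless of whether it was stopped at L1, L2, or L3. The `summarize` function and the shape of its result are assumptions made for this example, not the format of our published results.

```python
from collections import Counter

VALID_VERDICTS = ("L1", "L2", "L3", "F")


def summarize(verdicts: list[str]) -> dict:
    """Count verdicts per level and compute the share of blocked samples.

    Every level other than "F" counts equally as a block: there is no
    bonus for early blocking (L1) and no penalty for late blocking (L3).
    """
    unknown = set(verdicts) - set(VALID_VERDICTS)
    if unknown:
        raise ValueError(f"unknown verdicts: {sorted(unknown)}")

    counts = Counter(verdicts)
    total = len(verdicts)
    blocked = total - counts["F"]
    summary = {level: counts[level] for level in VALID_VERDICTS}
    summary["blocked_percent"] = 100.0 * blocked / total if total else 0.0
    return summary
```

Under this rule, a product that stops every sample only at L3 reaches the same `blocked_percent` as one that stops every sample at L1. The per-level counts are what expose the difference between them.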

Protection technologies in the tested software work as intended by the developer, so a product may not have a real-time scanner for URL addresses and downloaded files. If a developer did not include protection against phishing websites or webpages with malicious content, we see no reason to give negative points for that. In addition, such security solutions may have better blocking capabilities, but only once a file is saved on a disk.

For the reasons mentioned above, we do not want to favor any security suite, but to reveal the differences between, e.g., SecureAPlus Pro and Kaspersky Total Security. The solution from Singapore can easily compete with the Russian software in our “Advanced In The Wild Malware Test”, in which we show the differences in blocking threats at selected levels.

In some cases, the differences between preventive and behavioral technologies in products are so significant that we had to show them in a table and mark them accordingly with the L1, L2, and L3 levels.

We want to thank all interested developers for their quick response to the reported technical details and for their assistance in seamlessly automating our tests of their antivirus products.